A brief tl;dr before we dive into this project that I set for myself before immediately descending into madness:

I used Twitter’s new system of displaying views on every tweet to compare a sample of Twitter Blue users to a sample of users who didn’t pay real money for a little badge. Those who aren’t paying for Twitter Blue get more views on their tweets, probably because they make good tweets rather than doing crypto scams.

In this post, I am going to be as detailed as possible about my methodology so you can replicate my work to address the potential flaws and biases, if you wish. You can also download my data set here if you’d like to reanalyse it, or run more analyses on the data, because there are probably some more interesting things in there about the difference between normal Twitter users and the Blue weirdos, which I couldn’t be bothered to look at.

Introduction

Since buying Twitter, Elon Musk has coped hard with his divorce by making changes. This has included flooding the platform with Nazis, but more pertinent to this research project, introducing Twitter Blue, the special system for special big boys where you get a little blue tick, and you’re promised your posts will be boosted. He has also added a wee hit counter to every single tweet, which renders muscle memory of how to like or retweet entirely useless, and gives the entire app the look-and-feel of a Geocities website.

The view counter has been criticised as being fake, but for the purposes of this self-imposed project, I am going to sincerely pretend it gives a true and accurate read of the number of people who have seen a tweet. It probably is somewhat inflated, but I suspect it gives a reasonable ballpark.

Given the divorced saddo’s insistence that his magic medicine Twitter Blue will help increase views, I set out to use his view counter to address the research question:

Do Twitter Blue users receive more views on their tweets?

Method

Sampling Twitter accounts

By far the most challenging element of this task I had set myself was finding Twitter Blue users as the test group. The big problem here is that I usually immediately block conspiracy theorists, Tesla fanboys and crypto scammers. My block list would have been the obvious place to look, but it is very long, and using it would also have involved wading through a bunch of transphobes and misogynists, so I had to use different methods.

I considered tweeting something mildly critical of Elon Musk to summon them to my mentions, but decided against this approach as it would skew the Twitter Blue sample into those who were feeling particularly lonely and in need of attention that afternoon. Plus, my account would probably get banned before I had gathered sufficient data. In the end, I looked at the replies under an Elon Musk tweet, and selected the first 20 Twitter Blue accounts that I saw replying. They may appear in a different order to you, because Twitter is an algorithmic hellscape.

I’d initially intended to sample 50 Twitter Blue users to give myself a nice big sample size, but I entirely lost the will to live at 20 because their accounts are so terrible to have to look at, so this analysis is based on 20 Twitter Blue users. I cannot emphasise enough how thoroughly depressing it was to look at even 20 accounts. I’ve never seen so many crypto advocates, cranks and NFT profile pictures at once.

The control group consists of 20 users with no blue ticks. These were identified by opening up my timeline and selecting the first 20 accounts whose tweets I saw. This included accounts retweeted into my timeline. I have my timeline set to chronological, if that helps you to replicate my methods.

“Legacy” blue ticks – the ones who earned their ticks by being notable – were excluded entirely from this analysis because I’m not sure if that does anything to visibility with the algorithm tweaks that Space Karen has been making.

Sampling tweets

To get a fair measure of views without skewing based on one tweet that did numbers, I used the 10 most recent timeline tweets that each of the 40 accounts included in the analysis had made. Replies were excluded from the analysis, as were retweets.

If an account did not have 10 tweets with the view counter visible, i.e. they had not tweeted sufficiently since the view counter had come into effect, they were excluded from the analysis. Four accounts were excluded from the Blue sample, and three from the control sample, under these criteria. To keep the number of included accounts at 20 per group, whenever an account was excluded, another account was selected using the criteria above.
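For anyone replicating this, the exclude-and-replace rule above can be sketched roughly as follows. This is a minimal sketch, not the procedure I actually followed (that was done by hand, in despair): the function name `select_accounts`, the `(handle, view_counts)` layout and the example accounts are all made up for illustration.

```python
def select_accounts(candidates, target=20, required_tweets=10):
    """Pick the first `target` accounts that have enough view-counted tweets.

    `candidates` is an ordered list of (handle, view_counts) pairs, in the
    order the accounts were encountered. This layout is an assumption made
    for the example, not the format of the real data set.
    """
    included, excluded = [], []
    for handle, view_counts in candidates:
        if len(included) == target:
            break  # the sample is full, so stop looking at further accounts
        if len(view_counts) >= required_tweets:
            included.append(handle)
        else:
            excluded.append(handle)  # too few tweets with a visible counter
    return included, excluded


# Made-up example: three candidates, a target of two accounts, three tweets each.
candidates = [
    ("account_a", [120, 340, 95]),
    ("account_b", [80]),  # not enough tweets, so the next account replaces it
    ("account_c", [410, 220, 130]),
]
included, excluded = select_accounts(candidates, target=2, required_tweets=3)
print(included, excluded)
```

With the real criteria you would leave `target=20` and `required_tweets=10` at their defaults and run it once per group.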

View count metric

The view count as presented on each of the 10 tweets for each account was used, under the assumption that it was probably at least somewhat vaguely related to the number of times it had been seen.

A mean of all views of all of the 10 tweets per account was calculated.
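As a rough illustration of the per-account metric, here is a small sketch using Python's standard `statistics` module. The function name `account_mean_views` and the view counts are invented for the example, and it assumes the list is ordered newest first.

```python
from statistics import mean


def account_mean_views(view_counts, required_tweets=10):
    """Mean views over an account's most recent `required_tweets` tweets.

    Assumes `view_counts` is ordered newest first and contains only timeline
    tweets (no replies or retweets).
    """
    if len(view_counts) < required_tweets:
        raise ValueError("account has too few view-counted tweets to include")
    return mean(view_counts[:required_tweets])


# Invented view counts for one account's ten most recent tweets.
example_views = [100, 200, 300, 400, 500, 600, 700, 800, 900, 1000]
example_mean = account_mean_views(example_views)
print(example_mean)
```

Each account then contributes a single number to the analysis, so the group comparison is between 20 per-account means on each side rather than 200 individual tweets.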

Results

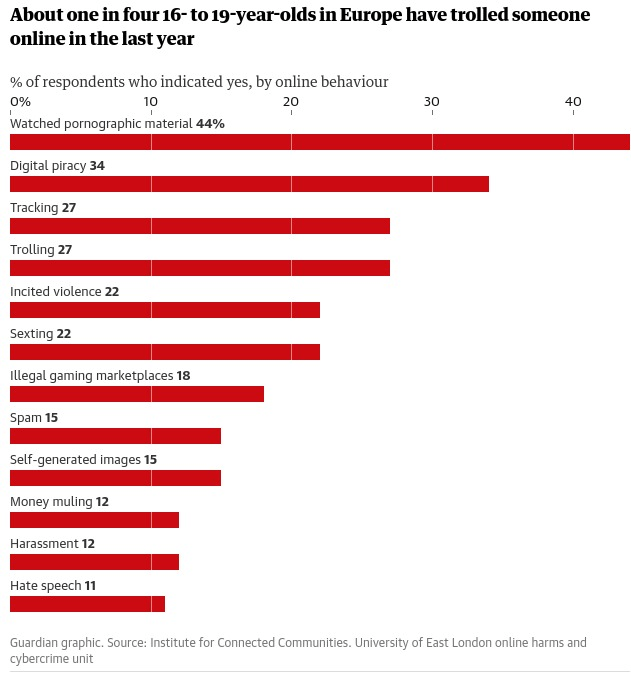

Demographics

Accounts were not an exact like-for-like comparison. The Twitter Blue accounts had more followers on average than the control sample, and the control accounts were, on average, older.

| | Twitter Blue | Control |
| --- | --- | --- |
| Mean followers | 14099.2 (SD = 19526.2) | 5801.1 (SD = 5632.4) |
| Mean account age (months) | 54.4 (SD = 55.6) | 123 (SD = 55.4) |

Tweet views

The mean views of tweets from Twitter Blue accounts was 841.4 (SD = 774.4). The mean views of tweets from the control accounts was 1875.4 (SD = 1780).

Now, that’s obviously a pretty big difference. Despite having fewer than half as many followers, the control group had more than twice as many views per tweet. I decided to go just a little bit harder, though. An independent samples t-test was used to assess whether this difference was significant. It was: t(38) = 2.38, p = .02.

There was a statistically significant difference in number of views on tweets: users who had not decided to pay for Twitter Blue received significantly more views.
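If you want to check the arithmetic without the raw data, the test statistic can be approximately reconstructed from the summary figures alone. The sketch below assumes the reported SDs are sample standard deviations of the 20 per-account means in each group, and that the test pooled the variances (which is what 38 degrees of freedom implies). The p-value is obtained by numerically integrating the t distribution with Simpson's rule rather than calling a stats library, so treat it as an approximation. All function names are my own.

```python
import math


def pooled_t_test(mean_a, sd_a, n_a, mean_b, sd_b, n_b):
    """Independent samples t-test (equal variances assumed) from summary stats."""
    df = n_a + n_b - 2
    pooled_var = ((n_a - 1) * sd_a**2 + (n_b - 1) * sd_b**2) / df
    std_err = math.sqrt(pooled_var * (1 / n_a + 1 / n_b))
    return (mean_a - mean_b) / std_err, df


def t_pdf(x, df):
    """Probability density of Student's t distribution with `df` degrees of freedom."""
    coeff = math.gamma((df + 1) / 2) / (math.sqrt(df * math.pi) * math.gamma(df / 2))
    return coeff * (1 + x * x / df) ** (-(df + 1) / 2)


def two_tailed_p(t, df, steps=2000):
    """Approximate two-tailed p-value using Simpson's rule on [0, |t|]."""
    upper = abs(t)
    h = upper / steps  # `steps` must be even for Simpson's rule
    total = t_pdf(0, df) + t_pdf(upper, df)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_pdf(i * h, df)
    area = total * h / 3  # probability mass between 0 and |t|
    return 1 - 2 * area


# Control group first, then Twitter Blue, using the figures reported above.
t_stat, df = pooled_t_test(1875.4, 1780, 20, 841.4, 774.4, 20)
p_value = two_tailed_p(t_stat, df)
print(f"t({df}) = {t_stat:.2f}, p = {p_value:.3f}")
```

Run on the reported means and SDs, this should land in line with the t(38) = 2.38, p = .02 given above. Note that the control group's SD is more than double the Blue group's, so a version that does not assume equal variances (Welch's test) would be a reasonable thing to try on the raw data.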

Discussion

Twitter Blue accounts just aren’t receiving the views that organic accounts are, despite the algorithmic boosting that they are receiving. This is probably because they’re just making bad posts that nobody actually wants to see. They’re not even getting ratio’ed for their bad takes, because their bad takes are so terminally pedestrian. It is possible that the difference is so marked between the Twitter Blue accounts and the control group because I have exceptional taste in curating my timeline, but I really cannot emphasise enough how bad the Blue tweets were. It was an utter morass of crypto scams peppered with weird anti-vax conspiracy theories. Nobody wants to see that, not even other Twitter Blue subscribers.

Future directions

I have supplied my data set here. There are a few interesting bits and bobs in there that I noticed while inputting it but didn’t bother analysing because it’s a small data set and also my girlfriend wanted to go to the pub, which was a better life choice than the one I’d made. If you have time on your hands, perhaps you’d like to have a go at addressing one of these questions:

- Are there indicators of organic reach within the control sample? The numbers in general among the control group felt a little more indicative of consistent reach peppered occasionally with a tweet that did numbers. Maybe have a go at smoothing and seeing if that’s the case.

- I didn’t adjust for age of the account or follower count. Does this make a difference? Try playing around with that.

- If you really, really have a load of extra time and a high tolerance for seeing the most tedious posts on the internet, why not have a go at running this analysis with a larger sample size and sampling which is not curtailed by losing the will to live completely?
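As a possible starting point for the first two questions, here is a hedged sketch. It swaps "smoothing" for something much cruder, the per-account median, which is less swayed by a single tweet that did numbers, and it divides by follower count as a very blunt adjustment. The function name `summarise_account`, the dictionary keys and the example numbers are all invented. I have not run any of this on the real data.

```python
from statistics import mean, median


def summarise_account(view_counts, followers):
    """Summarise one account's views, with and without outlier sensitivity."""
    typical = median(view_counts)  # barely moved by one viral tweet
    return {
        "mean_views": mean(view_counts),
        "median_views": typical,
        # Crude adjustment for audience size; assumes followers > 0.
        "views_per_follower": typical / followers,
    }


# Made-up account: nine ordinary tweets and one that did numbers.
views = [200, 220, 180, 210, 190, 205, 215, 195, 185, 5000]
summary = summarise_account(views, followers=1000)
print(summary)
```

A mean sitting far above the median, as in this invented example, is the "consistent reach plus the occasional hit" pattern described in the first question. Comparing `views_per_follower` between the two groups would be one simple way to see whether follower count changes the picture.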

Thank you for reading this labour of spite. I cannot believe I did this to myself.
